Just as I previously blogged about running your own UEC on top of EC2 (cloud on cloud), here is another cloud-on-cloud post showing you how to run an OpenStack compute development environment on top of EC2. All of the heavy lifting is really done by the awesome novascript! I started by launching Ubuntu server 10.10 64-bit (ami-688c7801) on an m1.large instance. If you're not sure how to get this done, please check my visual point-and-click guide to launching Ubuntu VMs on EC2.

Once ssh'ed into my Ubuntu server instance, I become root and run an update:

sudo -i

apt-get update && apt-get dist-upgrade

Let's see the available ephemeral storage

root@ip-10-212-187-80:~# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/sda1 9.9G 579M 8.8G 7% /

none 3.7G 120K 3.7G 1% /dev

none 3.7G 0 3.7G 0% /dev/shm

none 3.7G 48K 3.7G 1% /var/run

none 3.7G 0 3.7G 0% /var/lock

/dev/sdb 414G 199M 393G 1% /mnt

As you can see, /mnt is auto-mounted for us. We don't really need this. For nova (the OpenStack compute component) to start, it needs an LVM volume group called "nova-volumes", so we unmount /mnt and use sdb for our LVM purposes:

# umount /dev/sdb

# apt-get install lvm2

root@ip-10-212-187-80:~# pvcreate /dev/sdb

Physical volume "/dev/sdb" successfully created

root@ip-10-212-187-80:~# vgcreate nova-volumes /dev/sdb

Volume group "nova-volumes" successfully created

root@ip-10-212-187-80:~# ls -ld /dev/nova*

ls: cannot access /dev/nova*: No such file or directory

root@ip-10-212-187-80:~# lvcreate -n foo -L1M nova-volumes

Rounding up size to full physical extent 4.00 MiB

Logical volume "foo" created

root@ip-10-212-187-80:~# ls -ld /dev/nova*

drwxr-xr-x 2 root root 60 2010-11-10 10:27 /dev/nova-volumes
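The LVM steps above can be wrapped in a small script that is safe to re-run. This is only a sketch under a few assumptions: the function names (`vg_exists`, `is_mounted`, `setup_nova_volumes`) are made up for illustration, the `DISK` and `VG` defaults simply mirror the values used in this post, and it asks LVM directly (via `vgs`) whether the volume group exists rather than looking for a directory under /dev. It does not settle whether nova itself wants the /dev/nova-volumes directory to be present.

```shell
#!/bin/sh
# Sketch only: prepare the "nova-volumes" volume group on a spare disk.
# DISK and VG defaults mirror the post; override them for your machine.
DISK=${DISK:-/dev/sdb}
VG=${VG:-nova-volumes}

# Succeeds if LVM already knows a volume group with this name.
vg_exists() {
    vgs "$1" >/dev/null 2>&1
}

# Succeeds if the given device appears as a mounted source in a
# /proc/mounts-style table read from stdin.
is_mounted() {
    awk -v dev="$1" '$1 == dev { found = 1 } END { exit !found }'
}

# Create the volume group unless it is already there.
setup_nova_volumes() {
    if vg_exists "$VG"; then
        echo "volume group $VG already exists, nothing to do"
        return 0
    fi
    if is_mounted "$DISK" < /proc/mounts; then
        umount "$DISK" || return 1
    fi
    pvcreate "$DISK" && vgcreate "$VG" "$DISK"
}

# Run as root, after "apt-get install lvm2":
#   setup_nova_volumes
```

Nothing runs when the file is sourced; you call `setup_nova_volumes` yourself once you are happy that `DISK` points at a disk you can wipe.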

I had to create an arbitrary volume named "foo" just to get /dev/nova-volumes to be created. If there's a better way, let me know, folks. Let's go check out the novascript. You need to do that somewhere that has more open permissions than /root :) so /opt is perhaps a good choice:

# cd /opt

# apt-get install git -y

# git clone https://github.com/vishvananda/novascript.git

Initialized empty Git repository in /opt/novascript/.git/

remote: Counting objects: 121, done.

remote: Compressing objects: 100% (114/114), done.

remote: Total 121 (delta 42), reused 0 (delta 0)

Receiving objects: 100% (121/121), 16.62 KiB, done.

Resolving deltas: 100% (42/42), done.

From here, we simply follow the novascript instructions to download and install all components

# cd novascript/

# ./nova.sh branch

# ./nova.sh install

# ./nova.sh run

Watch huge amounts of text scroll by as all components are installed. The final "run" line starts a GNU screen session with all nova components running in screen windows. That is just awesome! For some reason though, my first run was unsuccessful. I had to detach from screen and kill it with Ctrl-C. I then tried starting the nova-api component manually, which worked fine! I then ran the script again, and strangely enough this time it worked flawlessly. Probably just an initialization issue. I thought I'd mention this in case any of you face it. Here's what I did, which you may or may not have to do:

# ./nova/bin/nova-api --flagfile=/etc/nova/nova-manage.conf

# ./nova.sh run # works this time .. duh

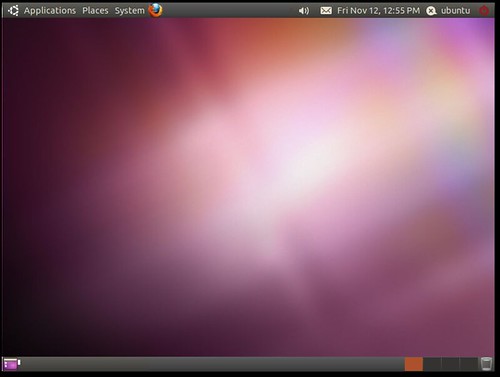

Almost there! Nova's components are now running inside screen, and you're dropped into screen window number 7. From there we proceed to create a key pair, launch a first instance and watch it spring to life:

# cd /tmp/

# euca-add-keypair test > test.pem

# euca-run-instances -k test -t m1.tiny ami-tiny

RESERVATION r-yehvnkwa admin

INSTANCE i-3fxfo2 ami-tiny 10.0.0.3 10.0.0.3 scheduling test (admin, None) 0 m1.tiny 2010-11-10 10:50:27.337898

# euca-describe-instances

RESERVATION r-yehvnkwa admin

INSTANCE i-3fxfo2 ami-tiny 10.0.0.3 10.0.0.3 launching test (admin, ip-10-212-187-80) 0 m1.tiny 2010-11-10 10:50:27.337898

# euca-describe-instances

RESERVATION r-yehvnkwa admin

INSTANCE i-3fxfo2 ami-tiny 10.0.0.3 10.0.0.3 running test (admin, ip-10-212-187-80) 0 m1.tiny 2010-11-10 10:50:27.337898
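Instead of re-running euca-describe-instances by hand until the state flips from "scheduling" to "running", the polling can be scripted. The sketch below is an assumption-laden helper, not part of novascript: `instance_state` and `wait_for_running` are hypothetical names, and the parser assumes the state is the 6th whitespace-separated field of the INSTANCE line, as it is in the output shown above. The `DESCRIBE_CMD` variable is only there so the command can be swapped out.

```shell
#!/bin/sh
# Sketch only: wait for an instance to reach the "running" state.
DESCRIBE_CMD=${DESCRIBE_CMD:-euca-describe-instances}

# Print the state column for one instance id, reading
# euca-describe-instances style output from stdin.
# Assumes the state is the 6th whitespace-separated field,
# as in the output shown in this post.
instance_state() {
    awk -v id="$1" '$1 == "INSTANCE" && $2 == id { print $6; exit }'
}

# Poll until the instance is running; give up after a number of tries.
# Arguments: instance id, max tries (default 30), delay in seconds (default 2).
wait_for_running() {
    id=$1
    tries=${2:-30}
    delay=${3:-2}
    while [ "$tries" -gt 0 ]; do
        state=$($DESCRIBE_CMD | instance_state "$id")
        if [ "$state" = "running" ]; then
            return 0
        fi
        tries=$((tries - 1))
        sleep "$delay"
    done
    echo "instance $id did not reach running (last state: ${state:-unknown})" >&2
    return 1
}

# Usage, with the instance id from the run above:
#   wait_for_running i-3fxfo2 && echo "instance is up"
```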

Let's ssh right in

# chmod 600 test.pem

# ssh -i test.pem root@10.0.0.3

The authenticity of host '10.0.0.3 (10.0.0.3)' can't be established.

RSA key fingerprint is ab:96:c3:ee:22:84:28:2f:77:ad:d9:a9:52:63:7c:f9.

Are you sure you want to continue connecting (yes/no)? yes

Warning: Permanently added '10.0.0.3' (RSA) to the list of known hosts.

--

-- This lightweight software stack was created with FastScale Stack Manager

-- For information on the FastScale Stack Manager product,

-- please visit www.fastscale.com

--

-bash-3.2# #Yoohoo nova on ec2
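A small aside on the key handling above: test.pem is written first and only tightened with chmod 600 afterwards. If you would rather have the file created with owner-only permissions from the start, one option is to set the umask while writing it. The helper name `save_private_key` below is hypothetical; the sketch just reads whatever arrives on stdin and stores it.

```shell
#!/bin/sh
# Sketch only: save a private key read from stdin so that the file is
# created with owner-only permissions from the start.
save_private_key() {
    keyfile=$1
    (
        umask 077            # new files get mode 600
        rm -f "$keyfile"     # make sure the file is created fresh
        cat > "$keyfile"
    )
}

# Usage, mirroring the commands in this post:
#   euca-add-keypair test | save_private_key test.pem
#   ssh -i test.pem root@10.0.0.3
```

The umask change happens inside a subshell, so it does not leak into the rest of your session.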

Once you detach from screen, all nova services are killed one by one to clean things up. With this setup, you can immediately hack on the code, then re-launch the nova components to see the effect. You can use bzr to update the codebase and so on.

In case you're wondering whether this works on KVM on your local machine, it does, beautifully! Of course, instead of the LVM-on-ephemeral-storage step, you'd have to pass a second KVM disk to the VM. Other than that, it's about the same. How awesome is that? Let me know if you have any questions or comments, and feel free to jump on IRC on #ubuntu-cloud and grab me (kim0). Have fun!